Why Should You Trust an Algorithm—Let Alone Its Token?

Many AI platforms still treat “trust” like a slogan, not a system. CAESAR thinks differently. Instead of asking you to believe, it invites you to audit, right down to the code commits and token flows. Let’s break down how that works and why it matters to you.

Meet $CAESAR: A Token With a Job Description

You’ve seen plenty of utility tokens. Few spell out why they exist in plain language. $CAESAR serves three concrete roles:

Proof of Skin-in-the-Game

• Every query you run stakes a micro-fee in $CAESAR.

• Bad output? You down-vote and reclaim part of that fee.

• Result: Careless prompts carry a real cost for the researchers who submit them, and you get a cleaner knowledge base.
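Want to see the stake-and-reclaim loop in miniature? Here is a rough Python sketch. The fee size, the refund percentage, and every name in it (`Account`, `run_query`, `downvote`) are assumptions made up for illustration; the article does not publish CAESAR's actual parameters or contract code.

```python
from dataclasses import dataclass

# Hypothetical values, expressed in whole micro-units of $CAESAR to avoid rounding.
QUERY_FEE = 100        # assumed micro-fee staked per query
REFUND_PERCENT = 50    # assumed share of the fee returned after a down-vote


@dataclass
class Account:
    balance: int


def run_query(account: Account, fee: int = QUERY_FEE) -> int:
    """Stake the micro-fee for one query and return the amount staked."""
    if account.balance < fee:
        raise ValueError("insufficient balance to stake the query fee")
    account.balance -= fee
    return fee


def downvote(account: Account, staked: int, refund_percent: int = REFUND_PERCENT) -> int:
    """Return part of the staked fee to the user after a bad output."""
    refund = staked * refund_percent // 100
    account.balance += refund
    return refund


user = Account(balance=1_000)
staked = run_query(user)          # balance drops by the staked fee
refund = downvote(user, staked)   # part of the fee comes back
print(f"staked={staked} refund={refund} balance={user.balance}")
```

With these made-up numbers, a bad answer still costs you half the fee, which is what keeps a query from being free to spam.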

Reputation Ledger

• Helpful community reviewers earn $CAESAR, which doubles as a credibility score.

• The higher your balance, the more weight your model feedback carries.

• Think of it as tradable clout, but anchored in actual work.
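To make "more weight" concrete, here is one way balance-weighted feedback could be tallied. This is a sketch under a big assumption: that weight scales linearly with balance. The article does not say which formula CAESAR uses, and `weighted_score` is a hypothetical helper.

```python
def weighted_score(votes: list[tuple[int, int]]) -> float:
    """Combine reviewer votes into one score between -1 and 1.

    Each entry is (reviewer_balance, vote), where vote is +1 (helpful)
    or -1 (unhelpful). Weight is assumed to be linear in balance.
    """
    total_weight = sum(balance for balance, _ in votes)
    if total_weight == 0:
        return 0.0
    return sum(balance * vote for balance, vote in votes) / total_weight


# One high-balance reviewer approves; two low-balance reviewers disagree.
votes = [(900, +1), (50, -1), (50, -1)]
score = weighted_score(votes)
print(score)
```

Notice that the single high-balance reviewer outweighs the two dissenters here. That is the trade-off of any balance-weighted scheme, and worth checking against whatever formula a real system publishes.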

Governance Key

• Major model upgrades and policy changes trigger on-chain votes.

• Holding tokens isn’t just speculative; it’s your admission ticket to steer the roadmap.

Actionable tip: Even if you never buy a coin, borrow this idea in your own project—link reputation directly to tangible contributions, not likes.

Tokenomics: Turning Loyalty Into a Measurable Asset

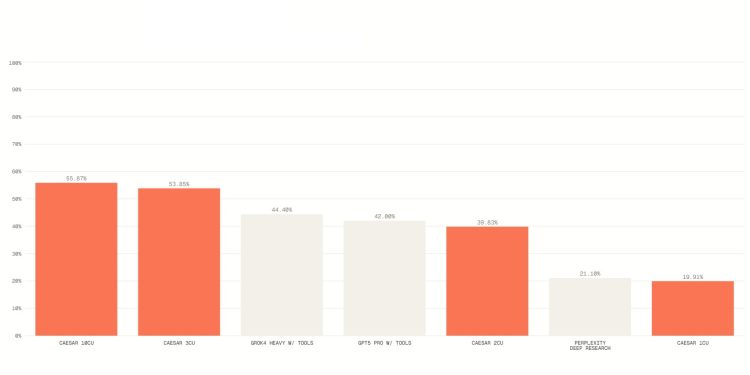

Still skeptical? Good—you should be. Here’s the practical math:

Fixed supply with a steady drip into reviewer rewards prevents runaway dilution.

Burn-on-bad-output nudges the system toward accuracy; no one likes paying for hallucinations.

Staking pools for domain experts (think oncology researchers or Solidity auditors) let niche communities bootstrap their own QA loops.
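If you want to sanity-check the "fixed supply, steady drip, burn on bad output" claim yourself, a toy simulation is enough. Everything numeric below is invented for illustration (supply size, drip rate, burn amount), and the `Supply` class and `step` function are hypothetical names, not CAESAR's contracts.

```python
from dataclasses import dataclass


@dataclass
class Supply:
    reserve: int       # tokens set aside for reviewer rewards
    circulating: int   # tokens held by users and reviewers
    burned: int = 0    # tokens destroyed so far

    @property
    def total(self) -> int:
        return self.reserve + self.circulating


def step(supply: Supply, drip: int, bad_outputs: int, burn_per_bad_output: int) -> None:
    """Advance one epoch: release the drip to reviewers, then burn for bad outputs."""
    released = min(drip, supply.reserve)      # never release more than the reserve holds
    supply.reserve -= released
    supply.circulating += released
    burn = min(bad_outputs * burn_per_bad_output, supply.circulating)
    supply.circulating -= burn
    supply.burned += burn


supply = Supply(reserve=200_000, circulating=800_000)
initial_total = supply.total
for bad_outputs in [10, 4, 0]:                # assumed bad outputs in three epochs
    step(supply, drip=1_000, bad_outputs=bad_outputs, burn_per_bad_output=5)

print(supply.total, supply.burned)
```

Because the drip only moves tokens out of an existing reserve, the total can shrink through burns but never grow past the starting supply. That is the property "prevents runaway dilution" depends on.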

Use case for you: If you run a DAO or dev community, experiment with a micro-burn when pull requests fail CI checks, then track whether the failure rate drops.
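Here is a minimal sketch of how such a micro-burn could be settled, assuming each contributor posts a small deposit when opening a pull request. The deposit model, the amounts, and the `settle_pull_request` function are all hypothetical; adapt them to whatever token or points system your community already runs.

```python
def settle_pull_request(deposit: int, failed_ci_runs: int, burn_per_failure: int) -> tuple[int, int]:
    """Return (burned, returned) for a contributor's deposit.

    Each failed CI run burns a fixed amount, capped at the deposit itself,
    and the remainder goes back to the contributor.
    """
    burned = min(deposit, failed_ci_runs * burn_per_failure)
    return burned, deposit - burned


# Two failed CI runs before the pull request finally passes.
burned, returned = settle_pull_request(deposit=100, failed_ci_runs=2, burn_per_failure=5)
print(burned, returned)
```

Capping the burn at the deposit keeps the penalty bounded, so a flaky test suite cannot drain a contributor.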

Transparency and Co-Ownership—Less Buzzword, More Blueprint

CAESAR publishes:

Model changelogs in plain English alongside the Git diffs.

Treasury dashboards showing every token movement—yes, down to the gas fee.

Ethical position papers that outline where it won’t deploy, like autonomous weapons.

Why does that matter to you? Because trust isn’t a press release; it’s continuous disclosure. If your current AI vendor hides upgrade notes behind marketing gloss, ask why.

Who’s Actually Using CAESAR?

You’ll find three core tribes:

- Web3 Builders testing smart-contract logic with CAESAR’s code-validation module.

- Academic Researchers crowdsourcing peer review and citing blockchain-verifiable sources.

- Policy Analysts who need auditable reasoning trails before they brief a minister or a board.

Real-world example: A climate-finance DAO recently used CAESAR to draft—and publicly verify—an impact disclosure. The entire community signed off on the wording and the math before funds moved.

The Bigger Question: What Does “Trust” Mean in Next-Gen AI?

Take a minute and answer for yourself:

Do you trust an AI because a big logo owns it?

Or because you can peek under the hood, challenge its logic, and even profit when you make it smarter?

CAESAR’s wager is that the second path scales better—and stays honest longer.

Your Move

Audit the Model: Visit caesar.xyz/explorer and scan a live inference trace.

Challenge the Tokenomics: Check the burn-and-earn dashboards; do the numbers add up?

Steal the Playbook: Even if you never touch $CAESAR, ask your current tools for the same transparency.

So, does CAESAR’s approach make sense to you? Take five minutes to poke around the explorer, and decide whether your definition of trust needs an upgrade.